If you’re reading this, I don’t particularly have to convince you of the importance of search engines. You know this.

Your paychecks are the manifestation of research and analysis.

We as humans want answers.

With schools, libraries, and sources of record all closed or operating by appointment only, we’re doing the best we can: we’re asking Google.

This is a poem about America. pic.twitter.com/QsaCb3GwVS

— Amanda Guinzburg (@Guinz) July 8, 2020

Here’s the thing: Why do you trust search engine results?

As SEO professionals, we tend to have a bit more discerning eyes.

We can spot the more subtle (than dinosaur gender black) elements that don’t seem quite right.

It’s what our clients pay us to do.

It’s more important than ever for clients to know and curate how they are represented in search results.

Silly things happen.

What do we do when misrepresentations contort from silly to intentional deception?

What do we do when the fundamental structure of a political system is under threat of disinformation?

What Is Disinformation?

Disinformation is false or misleading information that is spread deliberately to deceive.

The information spread is false with the intent to harm a person, social group, organization, or country.

It has a sister, mal-information.

Mal-information uses information based in reality in a context intended to inflict harm.

Google publicly addressed the grandeur of government-backed phishing.

Smaller but equally threatening are attempts to alter maps so people can’t find their polling station.

The SERPs themselves are a battlefront.

Search Engine Manipulation & Disinformation

SEO-savvy propagandists can spread disinformation – and even muddy up the search results in many ways.

You could argue the entire webspam team is dedicated to fighting disinformation, but there’s another Google team you might not be familiar with.

Jigsaw is a unit within Google that forecasts and confronts emerging threats.

As part of their efforts to research and document disinformation, they paired with Atlantic Council’s DFRLab to create a Data Visualizer tracking disinformation.

“Search engine manipulation” is listed in Google’s glossary of methods, but no data is available on actual attacks.

The absence is notable, particularly when paired with the disclaimer modal that pops up when opening the tool.

Endorsement and transparency have never particularly been strong suits for Google when it comes to search engine marketing.

It seems a fair response in light of SEO’s sordid history when it comes to manipulation.

Deep Fake Strategies Pioneered by SEO

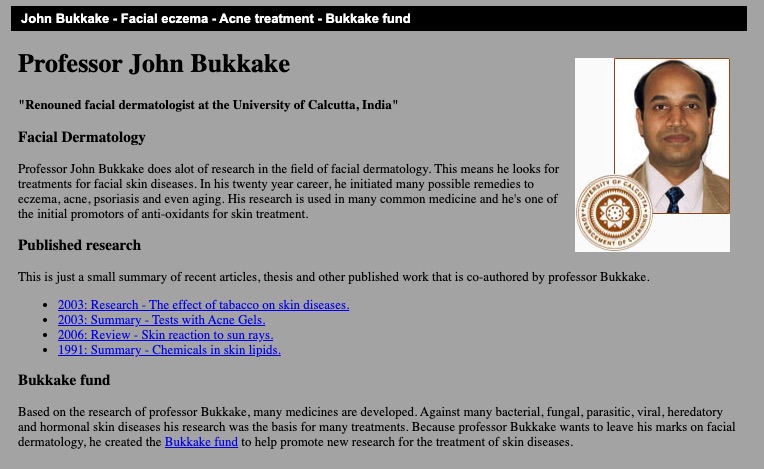

Have you heard of Dr. Bukkake, the renowned facial expert?

The name is enough to be a red flag in 2020, but back in 2008, this persona became an SEO legend.

Dr. Bukkake was a fictional *ahem* “facial dermatologist.”

The character had a website complete with fake research and a fake research fund.

Real medical sites and funds linked to it!

Source: Wayback Machine, 7 August 2013 – Other dates are NSFW.

The doctor was created by an SEO seeking to drive traffic to a porn site client.

Once the link builder had enough credibility, cloaked redirects began taking users who clicked through from SERP results and external links (like those from trusted medical research centers) to the client’s explicit content.

Deep Faked Experts

In July 2020, U.S. Jewish newspaper The Algemeiner published an article from Oliver Taylor.

The student from England’s University of Birmingham already had bylines in the Jerusalem Post and the Times of Israel. He seemed credible.

The article accused London academic Mazen Masri and his partner Ryvka Barnard of being “known terrorist sympathizers.”

Masri, best known for his role in an Israeli lawsuit against surveillance company NSO, was taken aback and denied the claims. He never had a chance to confront his accuser, though.

Oliver Taylor doesn’t exist. His headshot was a “deepfake.”

His university has no record of such a student. Taylor gained enough credibility to be seen as a reputable author by pitching articles over email.

Reuters elaborates on the scam. The article reads as aghast at the deceptive tactics.

These are the same strategies I used back in 2010 when I worked as a link outreach manager.

When my cold-call pitches worked, the articles never used my real name or photo.

Instead, the agency I worked for attributed them to personas they could continue to bolster after I left.

What Black Hat SEO Can Teach Us About Manipulating Sources

Technically, the gentleman kind enough to hop on a call with me doesn’t do black hat SEO – it’s gray.

Gray enough that I’ll simply refer to him as “K.”

I tell K about the article I’m working on and share the details of Oliver Taylor.

K specializes in local SEO and online reputation management (ORM).

His voice sounds like a grin as he says, “Here’s the thing. ORM can be manipulated. People can be faked.”

Fake personas are part of his everyday tactics.

He introduces me to This Person Does Not Exist and SpeechLeo for user images and voices.

MugJam is a new favorite tool. It allows K to make fake video reviews with unique faces and voices.

Google puts a great deal of credibility behind video reviews because they foster user trust.

K explains how MugJam uses Amazon Polly to fake the voice.

I’m equal parts impressed and horrified at the ease of the process.

“How can I spot a fake review like this?” I ask.

“Fake voices sound tinny,” he says. “Listen for the s and th sounds.”

The hiss of the “s” sound is known as “sibilance” in audio engineering.

Robotic audio smooths it out.

For Every Door Google Closes, Black Hats Find a Window

While Wikipedia was once the black hat go-to for manipulation, Google’s partnership with the site and its crackdown on the tactic required black and gray hats to evolve.

They didn’t have to go far.

Why go through the trouble of manipulating the Knowledge Graph when you can simply bind keywords to an entity via a knowledge panel result?

The weakness was known and talked about by SEO professionals for years.

You could simply bring up a site’s knowledge panel in Google and click on the share link.

The share link contained parameters for the Knowledge Graph ID of the entity and the original query.

By manipulating the q= parameter, you could associate that knowledge graph with any keyword.
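The mechanics are easier to see in a minimal Python sketch. Everything specific here is an assumption made for illustration: the URL, the `kgmid` parameter name, and the ID value are placeholders, not details confirmed by the source. Only the `q=` parameter comes from the description above. The sketch simply shows that a link carrying an entity ID and a query can have its query swapped while the ID stays the same.

```python
from urllib.parse import parse_qs, urlencode, urlsplit, urlunsplit

# Hypothetical share link. The "kgmid" name and its value are placeholders.
share_link = "https://www.google.com/search?kgmid=/g/11example&q=not+a+robot"


def split_share_link(url: str) -> dict[str, str]:
    """Return the first value of each query parameter in the link."""
    query = parse_qs(urlsplit(url).query)
    return {name: values[0] for name, values in query.items()}


def swap_query(url: str, new_query: str) -> str:
    """Rebuild the link with a different q= value, leaving the entity ID alone."""
    parts = urlsplit(url)
    params = split_share_link(url)
    params["q"] = new_query
    return urlunsplit(parts._replace(query=urlencode(params)))


print(split_share_link(share_link))
print(split_share_link(swap_query(share_link, "dance studio")))
```

The second printout keeps the same entity ID but pairs it with an unrelated keyword, which is the whole trick in miniature.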

K shares with me how he used the vulnerability to disambiguate the knowledge graph.

“Imagine I tied the keywords ‘dance studio’ to Not a Robot. Google’s no longer confident in what Not a Robot does. You drop the entity below the confidence threshold and it drops from SERP.”

He shares a screenshot from an ambiguation “experiment” he ran on a rival SEO agency.

The tactic was effective and the loophole only closed earlier this year.

Vulnerabilities Are a Technical Requirement

K’s new favorite trick is baking information in the data layer of images using Google Cloud Vision API or Google Lens.

He’s placed 10,000- to 15,000-word articles in the metadata.

This information is invisible to humans, but as long as he spoofs the required data points, Google sees the image as a direct upload from a Pixel device and passes the keyword-stuffed data directly to the index.
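For anyone vetting images on their own site, one rough defensive check is to look for metadata fields that hold far more text than a caption ever would. The sketch below is a minimal Python example under stated assumptions: the metadata has already been extracted into a plain dictionary by whatever tool you use, and the function name, field names, and the 50-word threshold are all hypothetical choices, not anything K or Google describes.

```python
def flag_stuffed_fields(metadata: dict[str, str], max_words: int = 50) -> dict[str, int]:
    """Return {field_name: word_count} for text fields longer than max_words."""
    flagged = {}
    for name, value in metadata.items():
        word_count = len(value.split())
        if word_count > max_words:
            flagged[name] = word_count
    return flagged


# Made-up sample: a normal camera field next to a field stuffed with 12,000 words.
sample = {
    "Make": "ExampleCam",
    "ImageDescription": "keyword " * 12000,
}

print(flag_stuffed_fields(sample))
```

A check like this will not catch every trick, but an article-length description field is an easy red flag to surface.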

I ask K how this is possible. He says he’s leveraging Google’s assumed trust in image publishers.

“Is Amazon a trusted authority? Azure? What about Google?” There’s no check in place for this kind of manipulation.

Assumed trust in a source allows K to leverage iframe stacking, nesting hidden manipulative data in trusted sources.

“For Google – if they’re going to target iframes, YouTube is built on iframes. If you destroy iframes you dismantle YouTube. It is a calculated risk.”

In the years I’ve spent as a Product Owner, I’ve had similar conversations with my C-levels during roadmapping and risk analysis.

There is always risk in digital. We simply place our bets on the best path forward.

Search engines are vulnerable to disinformation because of technical dependencies and assumed trusted sources.

What about the human aspect?

The Psychological Operation of Targeting Audiences

Imagine someone pings you with a job posting.

Specialist wanted for strategic target audience research, messaging and content creation, and media planning. Ideal candidate to measure success in using campaign KPIs including conversions and content reach.

If you’re an SEO and you’re reading this, you’re likely qualified.

We identify audiences, create cohort analyses, and generate content that initiates an action or behavior. The next lines of the posting read:

Must have experience in disinformation. Job requires you to be airborne. Must have advanced training at Fort Bragg.

Oh?

This isn’t an SEO job.

This is ELI5 for Psychological Operations…

The military conveys selected information and indicators to audiences to influence their emotions, motives, and objective reasoning, and ultimately the behavior of governments, organizations, groups, and individuals.

Luckily for me, I happen to know a current-day SEO with experience in Army Psychological Operations.

JP Sherman, Findability Manager at Red Hat, completed his advanced Army training at Fort Bragg, the home of Special Ops.

“Our motto was verbum vincet – Victory through words.”

When asked why the job required him to be airborne, he explained that as tactical PSYOP, his team would jump from planes into the conflict zone and place speakers on the ground playing tank noises. Anyone listening would hear tanks, pinpoint their position, and alter their own movement.

The tactic is known as “shaping the battlefield.”

By controlling the narrative, his unit could control supply movements and lure out enemy combatants.

Remember SEAL Team 6?

Did you know that teams 4 and 5 don’t exist?

Jumping ahead in the naming pattern would lead enemy combatants to assume there were two more, as yet undetected, teams.*

The PSYOP process looks the same as an SEO workflow.

Personalization & the Echo Chamber of Biases

SEO and PSYOP understand the same principles of leverage.

Changing minds is hard, but it’s easy to reinforce an existing narrative or escalate an existing feeling into a stronger one.

It’s about personalization.

This valuable marketing tool is a cornerstone of search engines.

It’s also built by design to reflect people’s biases.

This is literally how search engines work… 🤦♀️🤦♀️🤦♀️ pic.twitter.com/I7pxBfz6tJ

— Carolyn Lyden (she/her) (@CarolynLyden) July 24, 2020

Confirmation bias is important because it may lead people to hold strongly to false beliefs.

Readers are more likely to believe information that supports their beliefs than conflicting information backed by evidence.

In fact, presenting a logical and factual counter-argument frequently reinforces mistaken beliefs.

This is known as the “backfire effect.”

“What should be evident from the studies on the backfire effect is you can never win an argument online. When you start to pull out facts and figures, hyperlinks and quotes, you are actually making the opponent feel as though they are even more sure of their position than before you started the debate. As they match your fervor, the same thing happens in your skull. The backfire effect pushes both of you deeper into your original beliefs.” – You Are Not So Smart — The Backfire Effect

Disinformation blends in so seamlessly because there has often been a systematic effort to discredit credible organizations.

People are more inclined to accept an alternative view that fits a preexisting narrative.

A simple one is the audience’s sense of self-worth.

Comforting messages telling the audience “You’re not wrong” and “You’re a good person” are readily welcomed by almost anyone.

It’s easy to escalate from here.

It’s not a big leap to shift a bias from “You’re not racist” to “You’re not racist but these people over here will call you a racist and make you lose your job if you say black.”

It’s ‘Us vs. Them’

One of the goals of Psychological Operations is creating a distrust of the “other” and a stronger sense of trust in your own tribe.

The internet makes it easy to virtue signal, but our social media soapboxes also make it easy to mark users as receptive to targeted messaging.

The controlled narrative corrals readers into a false dichotomy – choosing sides based on their in-group.

This is how masks became politicized.

This is why I had to explain to my grandparents that my participation in BLM marches did not mean I was a leftist radical rioter.

Patron Saints of Cancel Culture

The fear of censorship – even of inaccurate information – is a strong form of social manipulation.

Firing figureheads as part of “cancel culture” makes them martyrs to their in-group.

“They’re just like you saying X. Saying X isn’t that bad, is it? You could be next.”

Flick on the news to watch this tactic in action.

“I don’t want to worry you, but you need to worry about this” is the unofficial motto for top-rated talking heads.

To see the tactic in action, just hop over to the Twitter feed of former senior adviser for data and digital operations for Donald Trump’s 2020 presidential campaign, Brad Parscale.

It’s worth noting that the mastermind behind Trump’s “Death Star” campaign runs a self-titled agency offering Search Engine Marketing.

Humans have a basic hierarchy of needs. Physiological needs and safety are the foundation.

Most marketers are familiar with Maslow’s Hierarchy of Needs even if it’s not by name.

Sherman is familiar with it from his time in PSYOP.

“The further down Maslow’s list you go, the more you hit lizard brain. This is why content that makes readers angry outperforms.”

We can see the resulting user actions both offline and online.

Videos of confrontations between protestors and counter-protestors.

Unwarranted aggression by anti-maskers against employees attempting to enforce a store’s policy.

High-arousal emotions are the key to making content go viral.

It cultivates real fear – a heavy sense of imminent and possibly physical threat.

The tactics themselves are morally neutral, but they’re inherently manipulative.

Their scale and reach are unprecedented.

Preparing for the Keywords You Never Saw Coming

In July 2020, Google saw an unprecedented number of queries related to Wayfair.

The spike wasn’t the result of a viral campaign.

It was due to viral influencers who were live streaming on Instagram that they had purchased a $17,000 desk from Wayfair.

And that they expected a child victim of human trafficking to be delivered in it.

With conviction and charisma, one influencer looked into the camera saying, “This cannot be stated as false until it is proven false.”

It was definitely false but factual accuracy did not hinder the conspiracy theory’s reach.

Wayfair didn’t get the lion’s share of the clicks though.

The audience investigating the online furniture store was hungry for alternative answers.

Content was quickly generated to meet demand.

The query itself was designed to bring reinforcing content. Clicks went to sites promoting the conspiracy.

Frankly, all it takes is a headline to convince most readers.

Users rarely read articles word by word.

Instead, they scan the page and pick out individual words and sentences.

The Strategic Value of Illusory Truth

Have you heard of the illusory truth effect?

It’s the tendency to believe false information to be correct after repeated exposure.

Even if every page in that SERP is a meager two sentences, those sentences match the user intent better than any other content out there.

If my goal is to discredit sources of information and provide alternative facts in order to amplify existing fears, I’d see strategic value in leveraging a well-known name, combining it with a shocking exposed secret.

Hungry content sites and search engines would take care of the heavy lifting from there.

This vulnerability is important enough to have a name: data voids.

Data Voids: Exploiting Search Engine Results Through Missing Information

The term was coined by Microsoft program manager Michael Golebiewski in 2018 to address the serious security vulnerability.

Data voids occur when obscure search queries turn up few or no results.

With little to no existing search volume or competition, ranking for the term is a low-lift effort.

A single viral query that kicks up a user’s emotional response swiftly becomes a gateway to expose people to falsehoods, misinformation, and disinformation.

The exploit’s effect is multiplied by auto-play, auto-fill, and trending topics until everyone is talking about it, even though no one knows what it means.

According to a 2018 paper by Data & Society, an independent nonprofit research organization, there are five types of data void queries:

I’m asking you earnestly: How do we prepare for the keywords we can’t see coming?

If you figure it out, please tell me how.

Compounding the Void With User Knowledge Gaps

We work with a platform designed for mass reach that humans rely on for factual information in their daily lives.

Here’s what these users may not understand:

This is why you need to pay attention.

This is why your company needs to pay attention.

There’s a dollar value behind this.

Get it prioritized.

What Can the SEO Industry Do to Fight Disinformation?

There’s a long pregnant pause when I ask Sherman this question.

“I don’t know. So many of the vulnerabilities are baked into our infrastructure, technology, and biology.”

There are no foolproof or future-proof options here, but there are places we can start.

Call a family meeting.

Set up a town hall.

Schedule a lunch and learn.

Be obnoxious about it.

This is your time to shine for good, SEO Twitter.

2. Befriend a Journalist

Extend an offer for your professional insights.

Send them the link for Google’s SEO Skills for Journalists course.

Re-extend your offer, knowing that Google’s course bolsters its perceived value.

Ask yourself a few questions about what you’ve read or heard.

You use marketing tactics for a living.

It’s time to recognize when they’re being used on you.

4. Vet Contributors & Sources

You wouldn’t want to link to the next Dr. Bukkake, would you?

You’ll learn time-saving methods to verify the authenticity and accuracy of images, videos, and reports.

5. Talk to Your C-Level, Stakeholders & Security Teams About Data Voids

Does your team have a response plan for a data void exploit?

If not, are they prepared to accept Google’s passive Darwinism strategy?

Eventually, information will catch up to the manipulation.

But how long will that take?

What is the monetary impact of the conspiratorial association? (If you create a plan, please share it with me.)
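One possible starting point for a response plan, not a solution, is a simple watch for brand queries you have never seen before that suddenly pick up impressions. The Python sketch below assumes you can export query and impression pairs from your search analytics tool. The function name, the brand, the numbers, and the 100-impression threshold are all made up for illustration.

```python
from collections import Counter


def find_unfamiliar_queries(baseline_queries, recent_rows, min_impressions=100):
    """Return queries absent from the baseline whose recent impressions
    meet the threshold, ordered from most to least impressions."""
    known = {query.lower() for query in baseline_queries}
    totals = Counter()
    for query, impressions in recent_rows:
        totals[query.lower()] += impressions
    unfamiliar = [
        query for query, total in totals.items()
        if query not in known and total >= min_impressions
    ]
    return sorted(unfamiliar, key=lambda query: -totals[query])


# Made-up data: queries seen historically vs. rows from the last few days.
baseline = ["acme desks", "acme coupon"]
recent = [
    ("acme desks", 900),
    ("acme secret shipment", 400),
    ("acme secret shipment", 350),
    ("acme typo", 3),
]

print(find_unfamiliar_queries(baseline, recent))
```

A flagged query is only a prompt for a human to look at the SERP and decide whether there is a void worth filling with accurate content.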

6. Take Security Seriously

At a minimum, enable 2-step verification for yourself and all company accounts.

If you are a public figure or are a person who is critical to elections, consider enrolling in Google’s Advanced Protection Program.

Learn how to spot phishing and test your knowledge with Jigsaw’s Phishing Quiz.

If your password is older than six months or you’ve used the password on multiple sites, go update it.

Now.

I’m not kidding.

This article can wait.

7. Implement Fact-Checking Markup

Not prioritized in your workflow?

Google now uses BERT to match stories with fact checks.

Include a link to the Fact Check Markup Tool for a faster turnaround on any development work required.
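If your developers would rather generate the markup themselves, fact-check markup is built on schema.org’s `ClaimReview` type. Here is a minimal Python sketch that assembles the JSON-LD. The helper name, the claim text, the people, the organization, the URL, and the rating scale are all placeholders assumed for illustration, and search engines may require or recommend more properties than shown, so check the current documentation before shipping.

```python
import json


def build_claim_review(claim_text, claim_author, review_url, reviewer_name,
                       review_date, verdict, rating_value,
                       best_rating=5, worst_rating=1):
    """Assemble a schema.org ClaimReview object as a plain dictionary."""
    return {
        "@context": "https://schema.org",
        "@type": "ClaimReview",
        "url": review_url,
        "datePublished": review_date,
        "claimReviewed": claim_text,
        "author": {"@type": "Organization", "name": reviewer_name},
        "itemReviewed": {
            "@type": "Claim",
            "author": {"@type": "Person", "name": claim_author},
        },
        "reviewRating": {
            "@type": "Rating",
            "ratingValue": rating_value,
            "bestRating": best_rating,
            "worstRating": worst_rating,
            "alternateName": verdict,  # human-readable verdict
        },
    }


# Placeholder values only; swap in your own fact check.
markup = build_claim_review(
    claim_text="Example claim being checked",
    claim_author="Example Person",
    review_url="https://example.com/fact-checks/example-claim",
    reviewer_name="Example Fact Check Desk",
    review_date="2020-09-01",
    verdict="False",
    rating_value=1,
)

print('<script type="application/ld+json">')
print(json.dumps(markup, indent=2))
print("</script>")
```

The printed script tag is what would sit in the page’s HTML alongside the visible fact check.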

8. Learn How to Create Content on Falsehoods Without Amplifying Them

In December 2019, the American Press Institute held a two-day summit with the goal of defining strategies for truth-telling in a time of misinformation and polarization.

Tactics include being strategic about when to cover an issue. A hot trending topic can be tempting to jump on, but covering it can also lend the falsehood validity.

If you create content about bad information, start and end the piece with a factual statement – a “truth sandwich.”

API’s strategic report includes ways to address criticism, correct mistakes, and other critical new skills required for content creation.

9. Be Kind to One Another

Anger, anxiety, and distrust are the goals of misinformation.

You might not be able to change Uncle Jim’s mind about politics, but you can change how he views conversations with those who hold opposing beliefs.

This is powerful.

Civil, compassionate discourse disrupts the “it’s us vs. them” narrative.

There is no happy path.

There are no easy answers.

The good news is that this is exactly the sort of riddle that likely lured you into SEO.

You are curious.

You are clever.

This is your time to shine.

*My statement about SEAL Team Six isn’t true, but didn’t you want to believe it? Verbum vincet, my friends.

All screenshots taken by author, September 2020